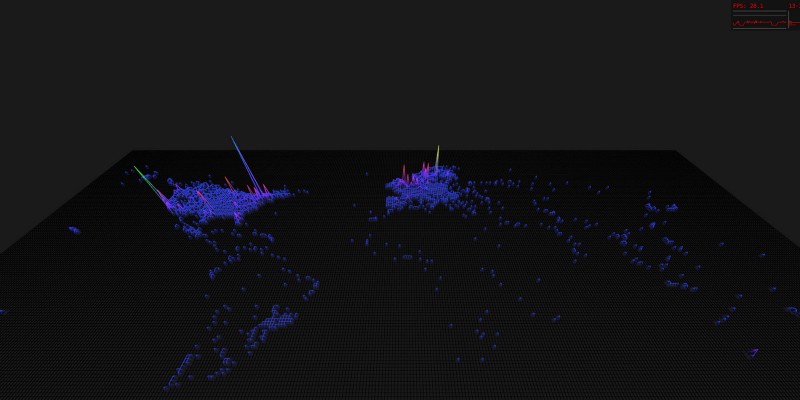

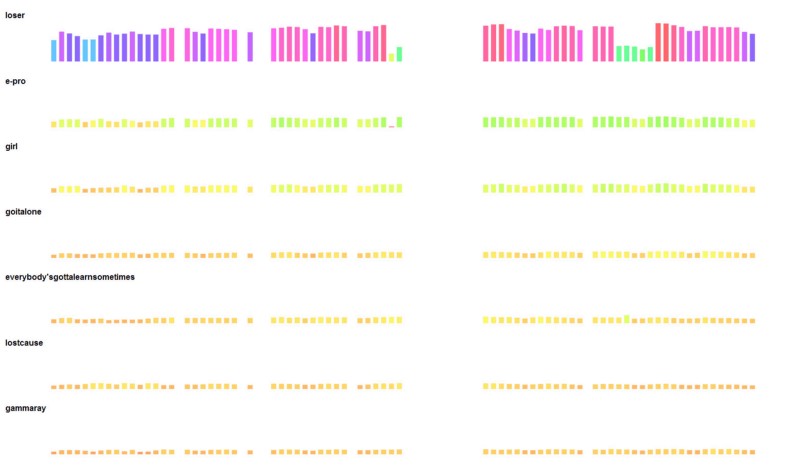

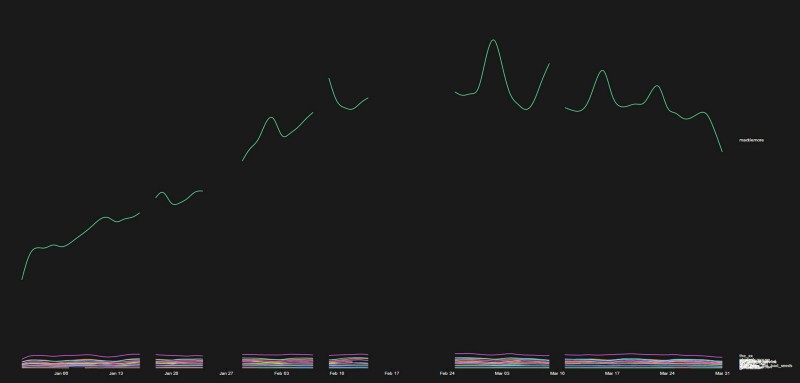

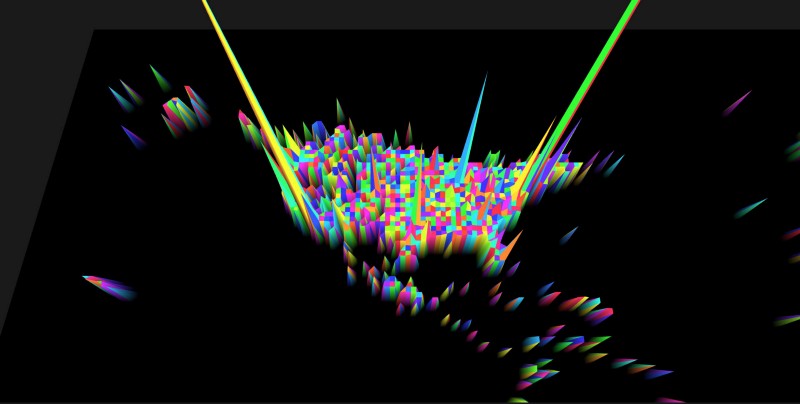

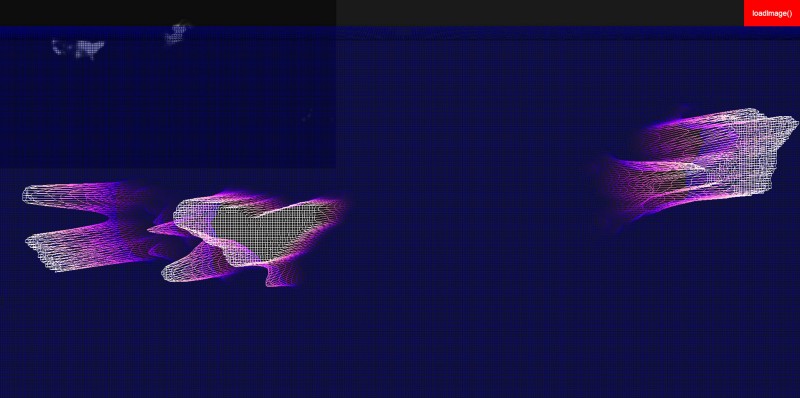

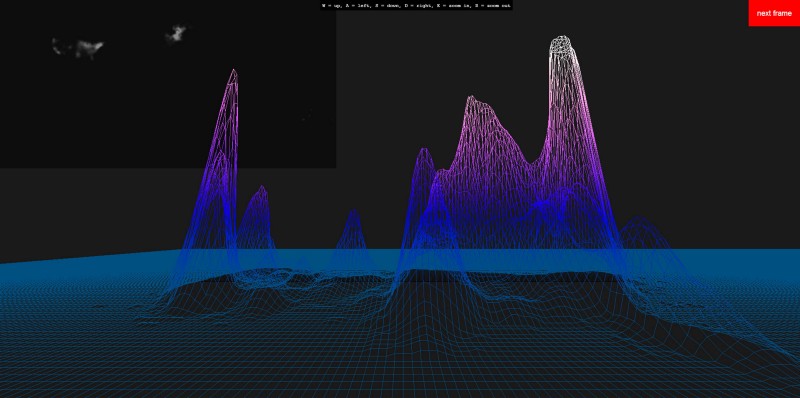

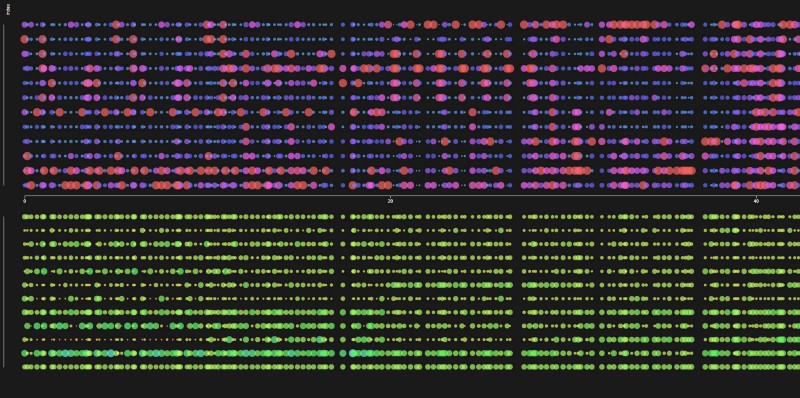

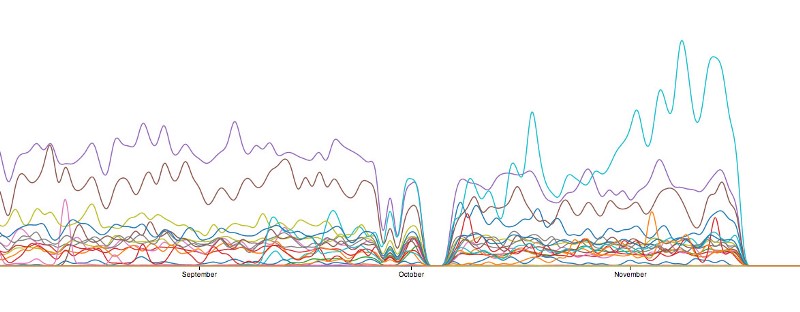

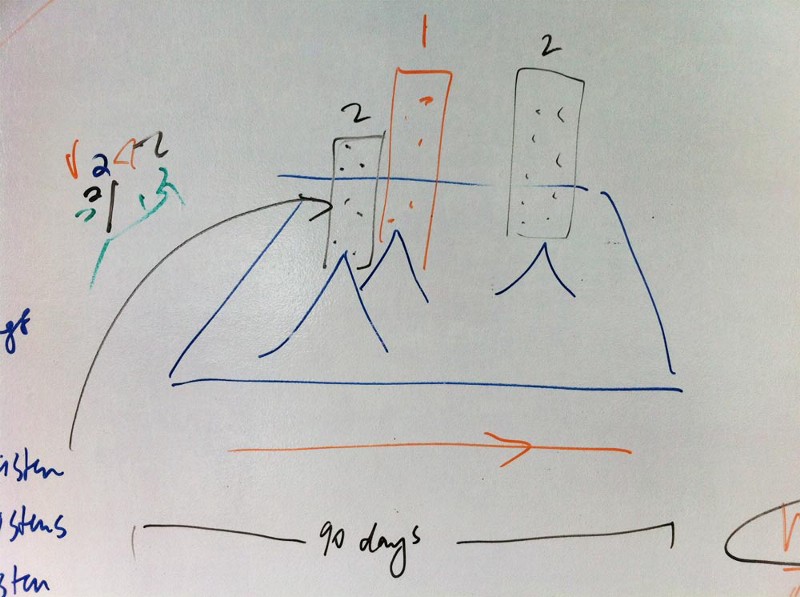

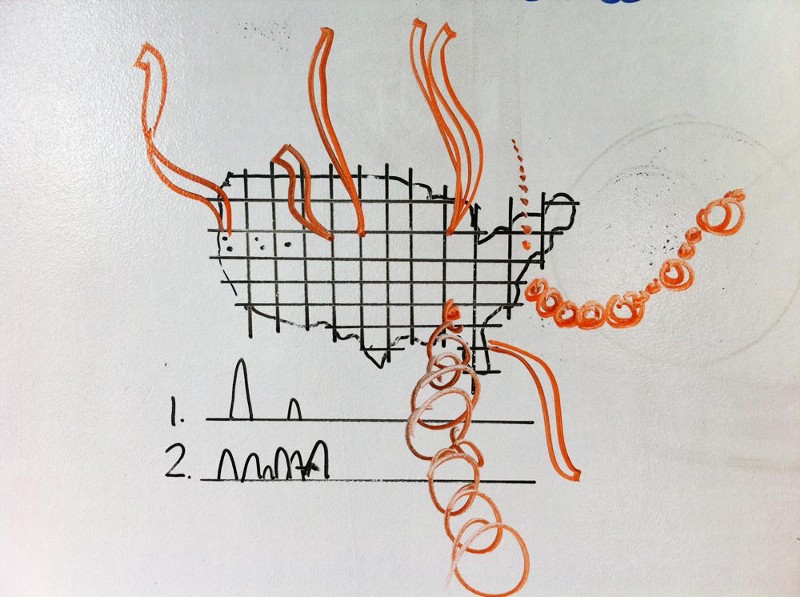

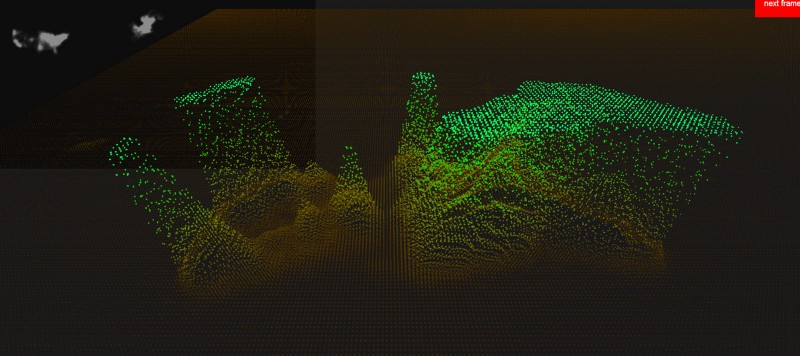

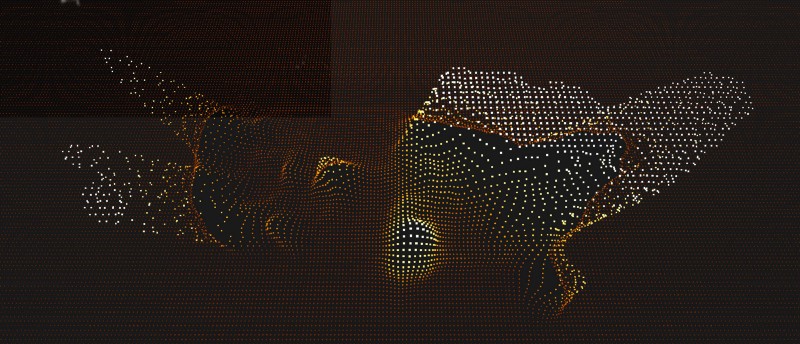

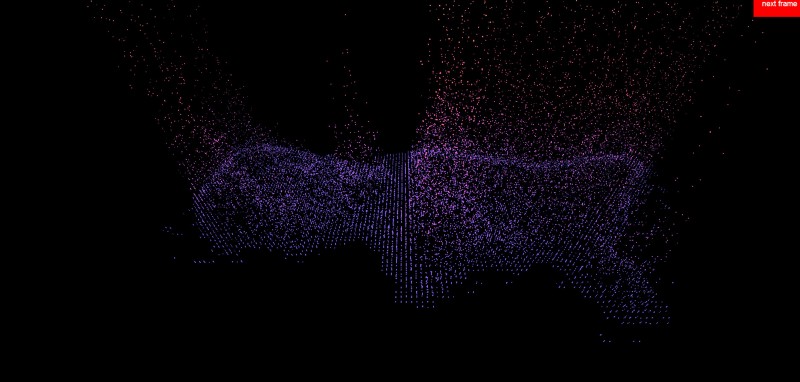

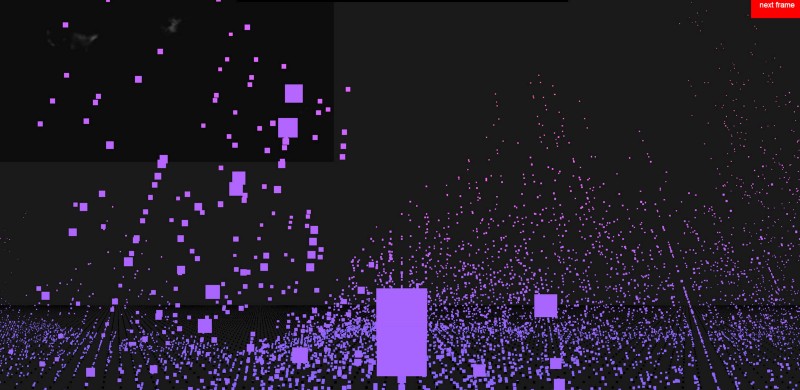

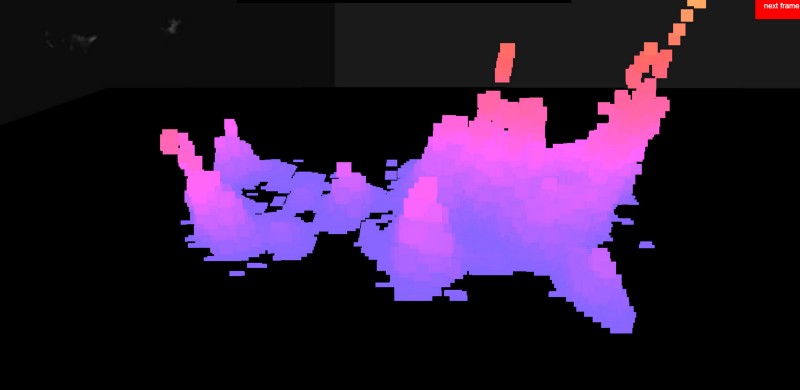

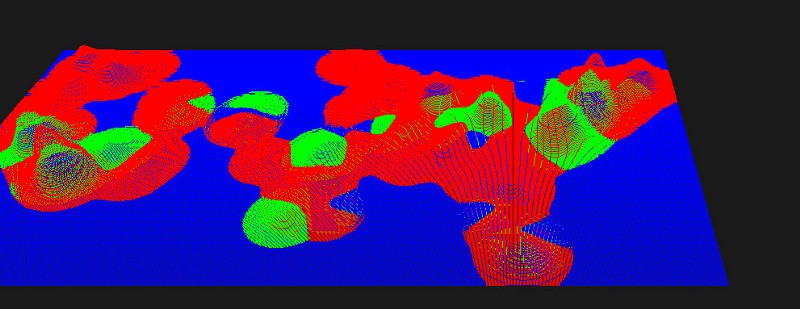

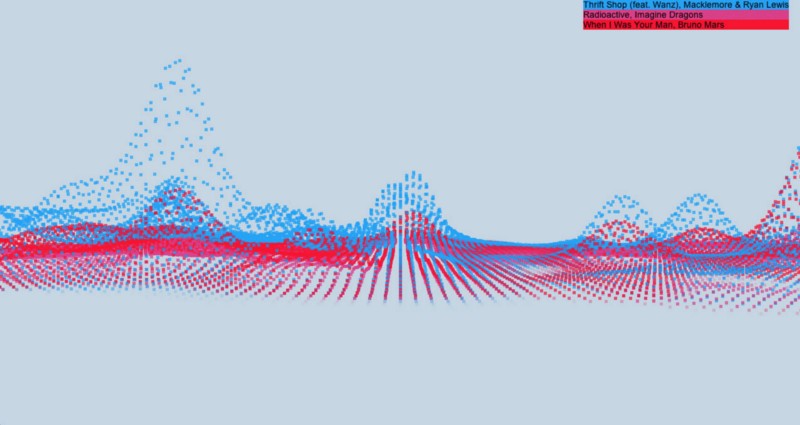

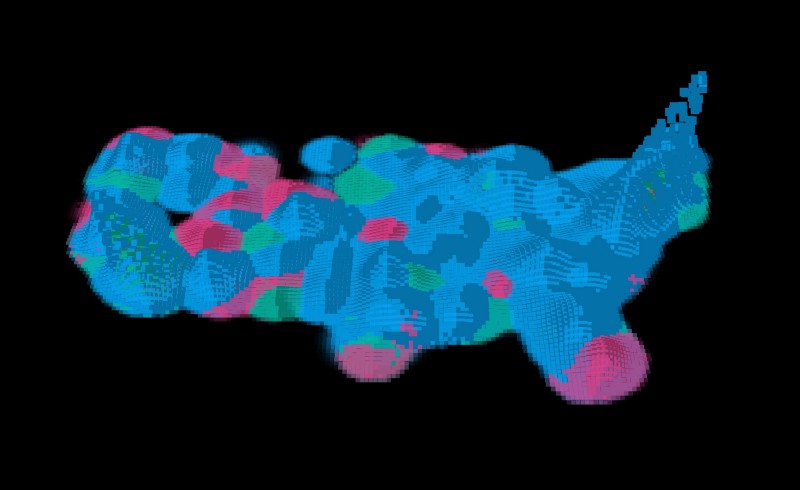

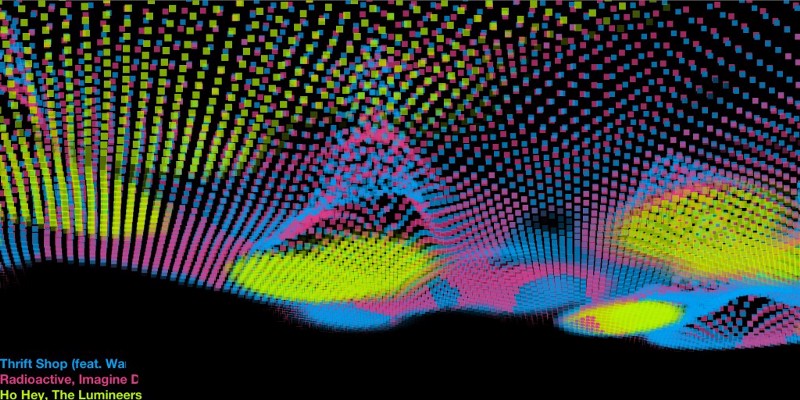

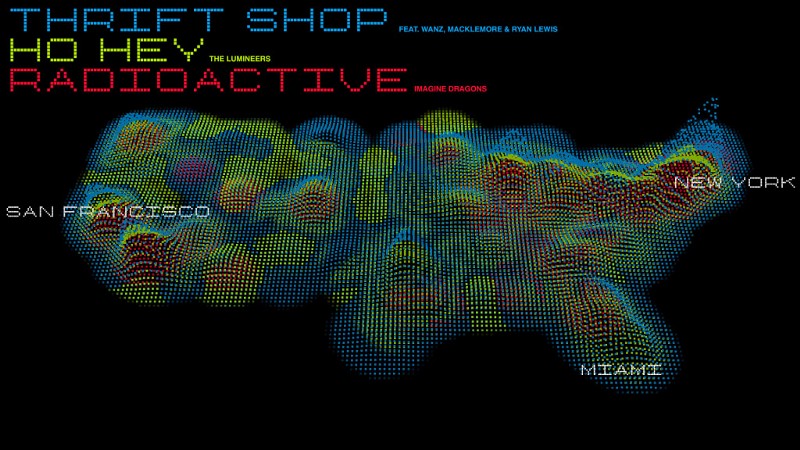

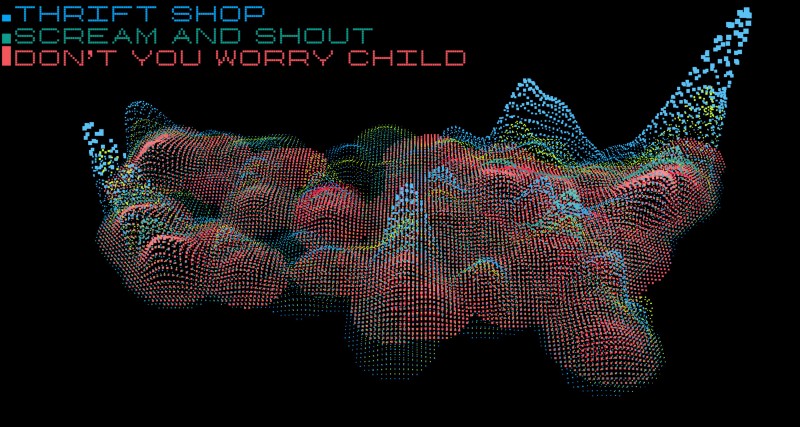

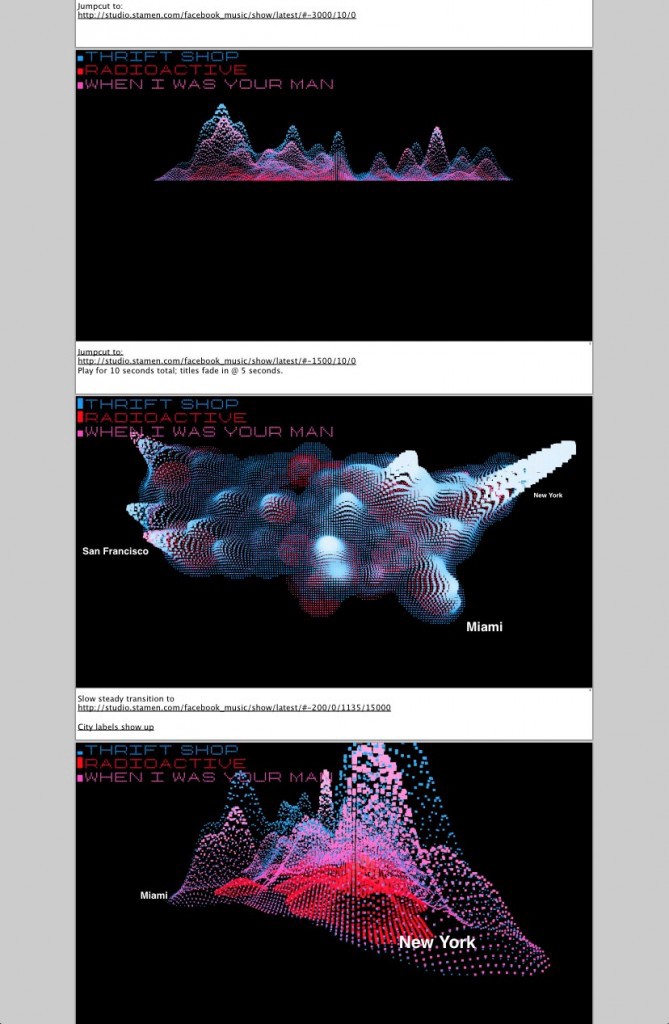

Facebook Stories has just released their third visualization project with us, which we’re affectionately calling “Beatquake.” The project is designed to show listening activity across Facebook over the course of 90 days. We were all surprised by how popular “Thrift Shop” by Macklemore & Ryan Lewis is. It blew out most of the investigative charting we worked on early in the process. Here’s how the project blossomed over the course of four weeks.

You can read about how this piece was made here, see more Facebook/Stamen in-process work here, and find a series of posts looking under the hood at how this kind of work gets made here, here and here.